
Published 24 Jul 2020

By The Apache Arrow PMC (pmc)


The Apache Arrow team is pleased to announce the 1.0.0 release. This covers over 3 months of development work and includes 810 resolved issues from 100 distinct contributors. See the Install Page to learn how to get the libraries for your platform.

Despite the “1.0.0” version number, this is the 18th major release of Apache Arrow. It marks a transition to binary stability of the columnar format (which was already informally backward-compatible going back to December 2017) and a transition to Semantic Versioning for the Arrow software libraries.

The release notes below are not exhaustive and only cover selected highlights of the release. Many other bugfixes and improvements have been made; we refer you to the complete changelog.

1.0.0 Columnar Format and Stability Guarantees

With the 1.0.0 release, the Arrow columnar format is declared stable, with forward and backward compatibility guarantees.

The Arrow columnar format received several recent changes and additions, leading to the 1.0.0 format version:

The metadata version was bumped to a new version V5, indicating an incompatible change in the buffer layout of Union types. All other types keep the same layout as in V4. V5 also includes format additions to assist with forward compatibility (detecting unsupported changes sent by future library versions). Libraries remain backward compatible with data generated by all libraries back to 0.8.0 (December 2017), and the Java and C++ libraries are capable of generating V4-compatible messages (for sending data to applications using 0.8.0 to 0.17.1).

Dictionary indices are now allowed to be unsigned integers rather than only signed integers. Using UInt64 is still discouraged because of poor Java support.

A “Feature” enum has been added to announce the use of specific optional features in an IPC stream, such as buffer compression. This new field is not used by any implementation yet.

Optional buffer compression using LZ4 or ZStandard was added to the IPC format.

Decimal types now have an optional “bitWidth” field, defaulting to 128. This will allow for future support of other decimal widths such as 32- and 64-bit.

The validity bitmap buffer has been removed from Union types. The nullity of a slot in a Union array is determined exclusively by the constituent arrays forming the union.
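As a rough illustration of what the bitWidth field governs: an Arrow decimal value is stored as a two's-complement unscaled integer of bitWidth bits, so the width bounds how many decimal digits (the precision) a value can carry. The minimal Python sketch below, with hypothetical helper names, computes that bound for a few widths. For 128 bits it gives 38 digits, the maximum precision of the existing 128-bit decimal type.

```python
def max_decimal_precision(bit_width: int) -> int:
    """Largest digit count d such that every d-digit unscaled value
    fits in a signed two's-complement integer of bit_width bits."""
    max_unscaled = 2 ** (bit_width - 1) - 1  # largest positive value
    digits = 0
    # 10 ** (digits + 1) - 1 is the largest number with digits + 1 digits.
    while 10 ** (digits + 1) - 1 <= max_unscaled:
        digits += 1
    return digits


def fits(unscaled: int, bit_width: int) -> bool:
    """True if an unscaled decimal value is representable in bit_width bits."""
    return -(2 ** (bit_width - 1)) <= unscaled <= 2 ** (bit_width - 1) - 1


# 123.45 with scale 2 would be stored as the unscaled integer 12345.
for width in (32, 64, 128):
    print(width, max_decimal_precision(width))  # prints 32 9, 64 18, 128 38
```

The 32- and 64-bit results (9 and 18 digits) show how much precision the narrower widths mentioned above would trade for smaller storage.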

Integration testing has been expanded to test for extension types and nested dictionaries. See the implementation matrix for details.

Community

Since the last release, we have added two new committers:

- Liya Fan

- Ji Liu

Thank you for all your contributions!

Arrow Flight RPC notes

Flight now offers DoExchange, a fully bidirectional data endpoint, in addition to DoGet and DoPut, in C++, Java, and Python. Middlewares in all languages now expose binary-valued headers. Additionally, servers and clients can set Arrow IPC read/write options in all languages, making compatibility easier with earlier versions of Arrow Flight.

In C++ and Python, Flight now exposes more options from gRPC, including the address of the client (on the server) and the ability to set low-level gRPC client options. Flight also supports mutual TLS authentication and the ability for a client to control the size of a data message on the wire.

C++ notes

- Support for static linking with Arrow has been vastly improved, including the introduction of a libarrow_bundled_dependencies.a library bundling all external dependencies that are built from source by Arrow’s build system rather than installed by an external package manager. This makes it significantly easier to create dependency-free applications with all libraries statically linked.

- Following the Arrow format changes, Union arrays cannot have a top-level validity bitmap anymore.

- A number of improvements were made to reduce the overall generated binary size in the Arrow library.

- A convenience API, GetBuildInfo, allows querying the characteristics of the Arrow library. We encourage you to suggest any desired addition to the returned information.

- We added an optional dependency on the utf8proc library, used in several compute functions (see below).

- Instead of sharing the same concrete classes, sparse and dense unions now have separate classes (SparseUnionType and DenseUnionType, as well as SparseUnionArray, DenseUnionArray, SparseUnionScalar, DenseUnionScalar).

- Arrow can now be built for iOS using the right set of CMake options, though we don’t officially support it. See this writeup for details.
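Since the format change removes the union's own validity bitmap and C++ now distinguishes sparse from dense unions, a small model may help. The minimal pure-Python sketch below uses hypothetical class names (it is not Arrow's C++ API), represents child arrays as lists with None for null, and assumes type ids index the children directly; in Arrow, the union type can instead map type codes to child positions.

```python
from dataclasses import dataclass
from typing import Any, List, Optional

# A child array modeled as a list in which None marks a null slot.
Child = List[Optional[Any]]


@dataclass
class SparseUnion:
    type_ids: List[int]    # one type id per slot, selecting the child
    children: List[Child]  # every child has the same length as the union

    def value(self, i: int) -> Optional[Any]:
        # Sparse: slot i of the union is slot i of the selected child.
        return self.children[self.type_ids[i]][i]

    def is_null(self, i: int) -> bool:
        # No top-level validity bitmap: nullity comes from the child alone.
        return self.value(i) is None


@dataclass
class DenseUnion:
    type_ids: List[int]    # one type id per slot
    offsets: List[int]     # position inside the selected child for each slot
    children: List[Child]  # children only hold the values routed to them

    def value(self, i: int) -> Optional[Any]:
        # Dense: the offsets buffer says where in the child to look.
        return self.children[self.type_ids[i]][self.offsets[i]]

    def is_null(self, i: int) -> bool:
        return self.value(i) is None


# The same logical values [1, "a", null] in both layouts.
sparse = SparseUnion(type_ids=[0, 1, 0],
                     children=[[1, None, None], [None, "a", None]])
dense = DenseUnion(type_ids=[0, 1, 0], offsets=[0, 0, 1],
                   children=[[1, None], ["a"]])
```

The trade-off is visible in the children: the sparse layout pads every child to full length, while the dense layout pays for an extra offsets buffer but stores each value only once.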

Compute functions

The compute kernel layer was extensively reworked. It now offers a generic function lookup, dispatch and execution mechanism. Furthermore, new internal scaffolding makes it much easier to write new function kernels, with many common details, such as type checking and dispatch based on type combinations, handled by the framework rather than implemented manually by the function developer.

Around 30 new array compute functions have been added. For example, Unicode-compliant predicates and transforms, such as lowercase and uppercase transforms, are now available.
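The lookup-then-dispatch mechanism described above can be pictured as a registry keyed first by function name and then by the combination of argument types. This is a toy Python model, not the Arrow C++ API; the registry class, type names, and function names are illustrative. Kernels only receive inputs that already passed type matching, mirroring the division of labor the text describes.

```python
from typing import Callable, Dict, List, Tuple

KernelKey = Tuple[str, ...]  # e.g. ("int64", "int64"); names are illustrative


class FunctionRegistry:
    """Toy registry: look up a function by name, then pick the kernel
    registered for the exact combination of argument types."""

    def __init__(self) -> None:
        self._kernels: Dict[str, Dict[KernelKey, Callable]] = {}

    def register(self, name: str, arg_types: KernelKey, kernel: Callable) -> None:
        self._kernels.setdefault(name, {})[arg_types] = kernel

    def call(self, name: str, args: List[Tuple[str, list]]) -> list:
        # Each argument is (type_name, values). Lookup and type checking
        # happen here, so individual kernels never repeat them.
        if name not in self._kernels:
            raise KeyError(f"no function named {name!r}")
        key = tuple(type_name for type_name, _ in args)
        kernel = self._kernels[name].get(key)
        if kernel is None:
            raise TypeError(f"{name!r} has no kernel for argument types {key}")
        return kernel(*(values for _, values in args))


registry = FunctionRegistry()
# str.upper() applies full Unicode case mapping, so "ß" becomes "SS".
registry.register("utf8_upper", ("utf8",),
                  lambda xs: [None if x is None else x.upper() for x in xs])
registry.register("add", ("int64", "int64"),
                  lambda xs, ys: [None if a is None or b is None else a + b
                                  for a, b in zip(xs, ys)])
```

For example, registry.call("utf8_upper", [("utf8", ["straße", None])]) returns ["STRASSE", None], while calling "add" with an int64 and a utf8 argument raises TypeError before any kernel runs.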

The available compute functions are listed exhaustively in the Sphinx-generated documentation.

Datasets

Datasets can now be read from CSV files.

Datasets can be expanded to their component fragments, enabling fine-grained interoperability with other consumers of data files. Where applicable, metadata is available as a property of the fragment, including partition information and (for the Parquet format) per-column statistics.

Datasets of Parquet files can now be assembled from a single _metadata file, such as those created by systems like Dask and Spark. The _metadata file contains the metadata of all fragments, allowing construction of a statistics-aware dataset with a single IO call.
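One reason per-fragment statistics matter is pruning: a reader can skip fragments whose column ranges cannot satisfy a filter. The sketch below assumes each fragment carries hypothetical (min, max) statistics, as might be gathered from a single _metadata file; the class, function, and file names are invented for illustration and are not the dataset API.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Fragment:
    path: str
    stats: Dict[str, Tuple[int, int]]  # column name -> (min, max)


def prune(fragments: List[Fragment], column: str, lo: int, hi: int) -> List[Fragment]:
    """Keep fragments whose [min, max] range for `column` overlaps [lo, hi].
    Fragments without statistics for the column are kept conservatively."""
    kept = []
    for frag in fragments:
        if column not in frag.stats:
            kept.append(frag)  # unknown range: it must be read to be safe
            continue
        col_min, col_max = frag.stats[column]
        if col_max >= lo and col_min <= hi:
            kept.append(frag)
    return kept


# Hypothetical fragments, as if all their metadata came from one read.
fragments = [
    Fragment("part-0.parquet", {"x": (0, 9)}),
    Fragment("part-1.parquet", {"x": (10, 19)}),
    Fragment("part-2.parquet", {}),
]
```

With these fragments, filtering on 12 <= x <= 15 keeps part-1.parquet (its range overlaps) and part-2.parquet (no statistics), and skips part-0.parquet without opening it.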

Feather

The Feather format is now available in version 2, which is simply the Arrow IPC file format under another name.

IPC

By default, we now write IPC streams with metadata V5. However, metadata V4 can be requested by setting the appropriate member in IpcWriteOptions. Both V4 and V5 metadata IPC streams can be read, with one exception: a V4 metadata stream containing Union arrays with top-level null values cannot be read.

As noted above, there are no changes between V4 and V5 that break backward compatibility. For forward compatibility scenarios (where you need to generate data to be read by an older Arrow library), you can set the V4 compatibility mode.

Support for dictionary replacement and dictionary delta was implemented.
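Dictionary deltas let a writer send only the entries added since the previous batch, so indices already sent stay valid, whereas a replacement sends a whole new dictionary. The Python sketch below models the delta case with a hypothetical encoder; it is not the IPC message format. It also shows that dictionary indices are never negative, which is why the unsigned index types mentioned earlier are a natural fit.

```python
from typing import Dict, List, Tuple


class DictionaryEncoder:
    """Toy encoder that remembers how many dictionary entries were already
    sent, so each later batch only needs to ship a delta."""

    def __init__(self) -> None:
        self.dictionary: List[str] = []
        self._index: Dict[str, int] = {}
        self._sent = 0  # number of dictionary entries already emitted

    def encode(self, values: List[str]) -> Tuple[List[str], List[int]]:
        """Return (delta, indices): entries added since the last call, and
        the non-negative index of every value in the full dictionary."""
        indices = []
        for v in values:
            if v not in self._index:
                self._index[v] = len(self.dictionary)
                self.dictionary.append(v)
            indices.append(self._index[v])
        delta = self.dictionary[self._sent:]  # slicing copies the new entries
        self._sent = len(self.dictionary)
        return delta, indices


def decode(dictionary: List[str], indices: List[int]) -> List[str]:
    return [dictionary[i] for i in indices]


enc = DictionaryEncoder()
delta1, idx1 = enc.encode(["a", "b", "a"])  # first batch sends ["a", "b"]
delta2, idx2 = enc.encode(["b", "c"])       # second batch sends only ["c"]
# A reader appends each delta to the dictionary it already holds.
reader_dictionary = delta1 + delta2
```

Because appending never moves existing entries, the reader can decode both batches against the same accumulated dictionary; a replacement would instead discard it and start over.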

Parquet

Writing files with the LZ4 codec is disabled because it produces files incompatible with the widely used Hadoop Parquet implementation. Support will be re-enabled once we align the LZ4 implementation with the special buffer encoding expected by Hadoop.

Java notes

The Java package introduces a number of low-level changes in this release. Most notable are the work in support of allocating large Arrow buffers and the removal of Netty from the public API. Users will have to update their dependencies to use one of the two supported allocators: Netty (arrow-memory-netty) or Unsafe (arrow-memory-unsafe, which uses the internal Java API for direct memory).

The Java Vector implementation has improved its interoperability: LargeVarChar, LargeBinary, LargeList, Union and Extension types, as well as duplicate field names in Structs, have been verified to be binary compatible with C++ and the specification.

Python notes

Wheel packages are significantly smaller, by up to 75%. One side effect is that these wheels no longer enable Gandiva (which requires the LLVM runtime to be statically linked). We are interested in providing Gandiva as an add-on package in a separate Python wheel in the future.

The Scalar class hierarchy was reworked to more closely follow its C++ counterpart.

TLS CA certificates are looked up more reliably when using the S3 filesystem, especially with manylinux wheels.

The encoding of CSV files can now be specified explicitly, defaulting to UTF-8. Custom timestamp parsers can now be used for CSV files.

Filesystems can now be implemented in pure Python. As a result, fsspec-based filesystems can now be used in datasets.

parquet.read_table is now backed by the dataset API by default, enabling filters on any column and more flexible partitioning.

R notes

The R package added support for converting to and from many additional Arrow types. Tables showing how R types are mapped to Arrow types and vice versa have been added to the introductory vignette, and nearly all types are handled. In addition, R attributes like custom classes and metadata are now preserved when converting a data.frame to an Arrow Table and are restored when loading them back into R.

For more on what’s in the 1.0.0 R package, see the R changelog.

Ruby and C GLib notes

The Ruby and C GLib packages added support for the new compute function framework, in which users can find a compute function dynamically and call it. Users don’t need to wait for a C GLib binding for new compute functions: if the C++ package provides a new compute function, users can use it without additional code in the Ruby and C GLib packages.

The Ruby and C GLib packages added support for Apache Arrow Dataset. The Ruby package provides a new gem for Apache Arrow Dataset, red-arrow-dataset. The C GLib package provides a new module for Apache Arrow Dataset, arrow-dataset-glib. They offer only a few features for now, but we will add more in future releases.


The Ruby and C GLib packages added support for reading only the specified row group in an Apache Parquet file.

Ruby

The Ruby package added support for column-level compression when writing Apache Parquet files.


The Ruby package changed the Arrow::DictionaryArray#[] behavior. It now returns the dictionary value instead of the dictionary index. This is a backward-incompatible change.

Rust notes

- A new integration test crate has been added, allowing the Rust implementation to participate in integration testing.

- A new benchmark crate has been added for benchmarking performance against popular data sets. The initial examples run SQL queries against the NYC Taxi data set using DataFusion. This is useful for comparing performance against other Arrow implementations.

- The Rust toolchain has been upgraded to 1.44 nightly.


Arrow Core

- Support for binary, string, and list arrays with i64 offsets to support large lists.

- A new sort kernel has been added.

- There have been various improvements to dictionary array support.

- CSV reader enhancements include a new CsvReadOptions struct and support for schema inference from multiple CSV files.

- There are significant (10x - 40x) performance improvements to SIMD comparison kernels.
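To see why i64 offsets matter: variable-length binary and string arrays store one offset per element plus a trailing one, and element i spans the data bytes between consecutive offsets. With signed 32-bit offsets the data buffer is limited to 2^31 - 1 bytes, which is the limit that 64-bit offsets lift. The pure-Python sketch below (hypothetical helper names, not the Rust crate's API) builds that layout and checks when 64-bit offsets would be needed.

```python
from typing import List, Tuple

INT32_MAX = 2**31 - 1  # largest offset a signed 32-bit offsets buffer can hold


def build_binary(values: List[bytes]) -> Tuple[List[int], bytes]:
    """Build an Arrow-style variable-length layout: all bytes concatenated
    into one data buffer, plus an offsets buffer of length n + 1."""
    offsets = [0]
    data = bytearray()
    for v in values:
        data += v
        offsets.append(len(data))
    return offsets, bytes(data)


def get(offsets: List[int], data: bytes, i: int) -> bytes:
    # Element i spans data[offsets[i]:offsets[i + 1]].
    return data[offsets[i]:offsets[i + 1]]


def needs_large_offsets(offsets: List[int]) -> bool:
    # The last offset equals the total data size; past INT32_MAX,
    # only 64-bit ("large") offsets can address it.
    return offsets[-1] > INT32_MAX


offsets, data = build_binary([b"ab", b"", b"cde"])  # offsets [0, 2, 2, 5]
```

An empty element simply repeats the previous offset, so nulls and empty values cost no data bytes, only one offset entry each.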

DataFusion

- There are numerous UX improvements to LogicalPlan and LogicalPlanBuilder, including support for named columns.

- General improvements to the code base, such as removing many uses of Arc and using slices instead of &Vec as function arguments.

- ParquetScanExec performance improvement (almost 2x).

- ExecutionContext can now be shared between threads.

- Rust closures can now be used as Scalar UDFs.

- Sort support has been added to SQL and LogicalPlan.

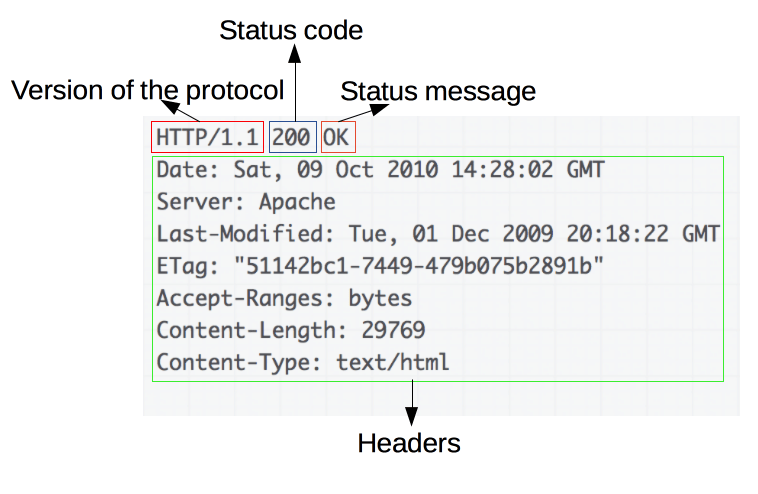

IMPORTANT NOTE: The HTTP/1.1 connector is now deprecated. Use the Coyote HTTP/1.1 Connector instead.

IMPORTANT NOTE: This connector MUST NOT be used in conjunction with any Context element that has the allowLinking attribute set to true. In this configuration it is possible for a malformed request to result in source code exposure of JSPs.

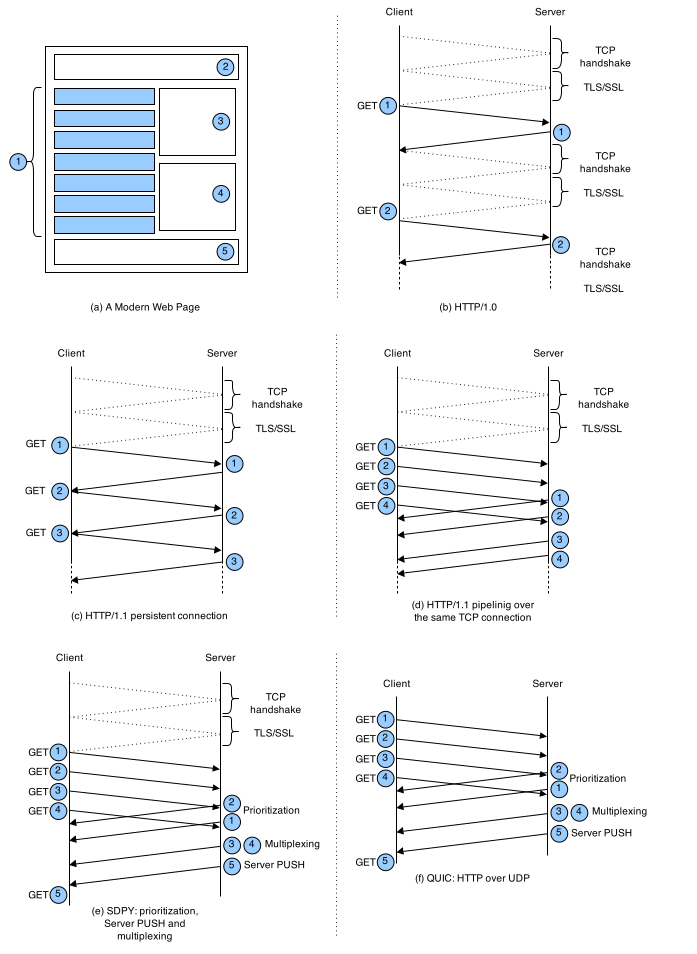

The HTTP/1.1 Connector element represents a Connector component that supports the HTTP/1.1 protocol. It enables Catalina to function as a stand-alone web server, in addition to its ability to execute servlets and JSP pages. A particular instance of this component listens for connections on a specific TCP port number on the server. One or more such Connectors can be configured as part of a single Service, each forwarding to the associated Engine to perform request processing and create the response.

If you wish to configure the Connector that is used for connections to web servers using the WARP protocol (such as the mod_webapp connector for Apache 1.3), see here instead.

At server startup time, this Connector will create a number of request processing threads (based on the value configured for the minProcessors attribute). Each incoming request requires a thread for the duration of that request. If more simultaneous requests are received than can be handled by the currently available request processing threads, additional threads will be created up to the configured maximum (the value of the maxProcessors attribute). If still more simultaneous requests are received, they are queued inside the server socket created by the Connector, up to the configured maximum (the value of the acceptCount attribute). Any further simultaneous requests will receive 'connection refused' errors until resources are available to process them.
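The thread and queue behavior described above can be summarized as a small capacity model. The Python sketch below is a simplification, not Tomcat's implementation: it treats all requests as arriving at once, ignores timing and thread reuse, and uses hypothetical field names modeled on the minProcessors, maxProcessors, and acceptCount attributes.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class ConnectorModel:
    """Simplified capacity model of the connector's threads and backlog."""
    min_processors: int  # threads created at startup
    max_processors: int  # upper bound on request processing threads
    accept_count: int    # requests allowed to wait in the server socket

    def classify(self, simultaneous: int) -> Dict[str, int]:
        """Split `simultaneous` concurrent requests into those being
        processed, those queued in the socket, and those refused."""
        processing = min(simultaneous, self.max_processors)
        queued = min(simultaneous - processing, self.accept_count)
        refused = simultaneous - processing - queued
        # The pool starts at min_processors and grows on demand to max.
        threads = max(self.min_processors, processing)
        return {"threads": threads, "processing": processing,
                "queued": queued, "refused": refused}


# Hypothetical settings, chosen only for illustration.
model = ConnectorModel(min_processors=5, max_processors=75, accept_count=10)
```

With these settings, 100 simultaneous requests yield 75 being processed, 10 queued, and 15 refused, while 3 requests are served by the 5 threads created at startup with nothing queued.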